Waveform on iOS

I'm looking for a way to draw the amplitude of a sound.

5 answers

Thanks, everyone.

I found this example here: drawing a waveform with AVAssetReader. I modified it and developed a new class based on it.

This class returns a UIImageView: it is a UIImageView subclass whose image is the rendered waveform of the given audio file.

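```objc
// .h file (WaveformImageVew.h)

#import <UIKit/UIKit.h>
#import <AVFoundation/AVFoundation.h>   // needed for AVURLAsset

@interface WaveformImageVew : UIImageView

// Creates an image view whose image is the waveform of the audio file at url.
- (id)initWithUrl:(NSURL *)url;

// Returns PNG data of the waveform (dB scale), or nil if the file cannot be read.
- (NSData *)renderPNGAudioPictogramLogForAssett:(AVURLAsset *)songAsset;

@end
```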

```objc
// .m file (WaveformImageVew.m), written for ARC

#import "WaveformImageVew.h"
#import <CoreMedia/CoreMedia.h>

#define absX(x) ((x) < 0 ? 0 - (x) : (x))
#define minMaxX(x, mn, mx) ((x) <= (mn) ? (mn) : ((x) >= (mx) ? (mx) : (x)))
#define noiseFloor (-50.0)
// Level of a signed 16-bit sample in dB relative to full scale (32767 = 0 dB).
// The argument is evaluated more than once, so never pass an expression like *p++.
#define decibel(amplitude) (20.0 * log10(absX(amplitude) / 32767.0))
#define imgExt @"png"
#define imageToData(x) UIImagePNGRepresentation(x)

@implementation WaveformImageVew

- (id)initWithUrl:(NSURL *)url {
    if ((self = [super init])) {
        AVURLAsset *urlA = [AVURLAsset URLAssetWithURL:url options:nil];
        [self setImage:[UIImage imageWithData:[self renderPNGAudioPictogramLogForAssett:urlA]]];
    }
    return self;
}

// Draws one vertical line per column: channel 1 in white (upper lane),
// channel 2 in red (lower lane) when the audio is stereo.
- (UIImage *)audioImageLogGraph:(Float32 *)samples
                   normalizeMax:(Float32)normalizeMax
                    sampleCount:(NSInteger)sampleCount
                   channelCount:(NSInteger)channelCount
                    imageHeight:(float)imageHeight {
    if (sampleCount <= 0) {
        return nil;
    }
    CGSize imageSize = CGSizeMake(sampleCount, imageHeight);
    UIGraphicsBeginImageContext(imageSize);
    CGContextRef context = UIGraphicsGetCurrentContext();

    // Black background
    CGContextSetFillColorWithColor(context, [UIColor blackColor].CGColor);
    CGContextSetAlpha(context, 1.0);
    CGContextFillRect(context, CGRectMake(0, 0, imageSize.width, imageSize.height));

    // Keep the UIColor objects alive while their CGColors are in use (ARC).
    UIColor *leftColor = [UIColor whiteColor];
    UIColor *rightColor = [UIColor redColor];
    CGContextSetLineWidth(context, 1.0);

    float halfGraphHeight = (imageHeight / 2) / (float)channelCount;
    float centerLeft = halfGraphHeight;
    float centerRight = halfGraphHeight * 3;
    // The loudest column spans its whole lane; avoid dividing by zero for silent input.
    float range = MAX(normalizeMax - noiseFloor, 1.0);
    float sampleAdjustmentFactor = (imageHeight / (float)channelCount) / range / 2;

    for (NSInteger intSample = 0; intSample < sampleCount; intSample++) {
        Float32 left = *samples++;
        float pixels = (left - noiseFloor) * sampleAdjustmentFactor;
        CGContextMoveToPoint(context, intSample, centerLeft - pixels);
        CGContextAddLineToPoint(context, intSample, centerLeft + pixels);
        CGContextSetStrokeColorWithColor(context, leftColor.CGColor);
        CGContextStrokePath(context);

        if (channelCount == 2) {
            Float32 right = *samples++;
            float rightPixels = (right - noiseFloor) * sampleAdjustmentFactor;
            CGContextMoveToPoint(context, intSample, centerRight - rightPixels);
            CGContextAddLineToPoint(context, intSample, centerRight + rightPixels);
            CGContextSetStrokeColorWithColor(context, rightColor.CGColor);
            CGContextStrokePath(context);
        }
    }

    // Create the new image and tidy up
    UIImage *newImage = UIGraphicsGetImageFromCurrentImageContext();
    UIGraphicsEndImageContext();
    return newImage;
}

- (NSData *)renderPNGAudioPictogramLogForAssett:(AVURLAsset *)songAsset {
    NSError *error = nil;
    AVAssetReader *reader = [[AVAssetReader alloc] initWithAsset:songAsset error:&error];
    AVAssetTrack *songTrack = [[songAsset tracksWithMediaType:AVMediaTypeAudio] firstObject];
    if (!reader || !songTrack) {
        NSLog(@"Cannot read audio: %@", error);
        return nil;
    }

    // Decode to interleaved, little-endian, signed 16-bit integer PCM.
    NSDictionary *outputSettingsDict = @{
        AVFormatIDKey : @(kAudioFormatLinearPCM),
        // AVSampleRateKey : @44100,      /* Not supported */
        // AVNumberOfChannelsKey : @2,    /* Not supported */
        AVLinearPCMBitDepthKey : @16,
        AVLinearPCMIsBigEndianKey : @NO,
        AVLinearPCMIsFloatKey : @NO,
        AVLinearPCMIsNonInterleaved : @NO
    };
    AVAssetReaderTrackOutput *output = [[AVAssetReaderTrackOutput alloc] initWithTrack:songTrack
                                                                        outputSettings:outputSettingsDict];
    [reader addOutput:output];

    // Take the sample rate and channel count from the track's format description.
    UInt32 sampleRate = 44100, channelCount = 1;
    for (id formatDescription in songTrack.formatDescriptions) {
        CMAudioFormatDescriptionRef item = (__bridge CMAudioFormatDescriptionRef)formatDescription;
        const AudioStreamBasicDescription *fmtDesc = CMAudioFormatDescriptionGetStreamBasicDescription(item);
        if (fmtDesc) {
            sampleRate = fmtDesc->mSampleRate;
            channelCount = fmtDesc->mChannelsPerFrame;
        }
    }
    UInt32 bytesPerSample = 2 * channelCount; // bytes per interleaved 16-bit frame

    Float32 normalizeMax = noiseFloor;
    NSMutableData *fullSongData = [[NSMutableData alloc] init];
    [reader startReading];

    Float64 totalLeft = 0;
    Float64 totalRight = 0;
    Float32 sampleTally = 0;
    NSInteger samplesPerPixel = sampleRate / 50; // about 50 image columns per second of audio

    while (reader.status == AVAssetReaderStatusReading) {
        CMSampleBufferRef sampleBufferRef = [output copyNextSampleBuffer];
        if (!sampleBufferRef) {
            break; // no more data, or the reader failed (checked below)
        }
        @autoreleasepool {
            CMBlockBufferRef blockBufferRef = CMSampleBufferGetDataBuffer(sampleBufferRef);
            size_t length = CMBlockBufferGetDataLength(blockBufferRef);
            NSMutableData *data = [NSMutableData dataWithLength:length];
            CMBlockBufferCopyDataBytes(blockBufferRef, 0, length, data.mutableBytes);

            SInt16 *frames = (SInt16 *)data.mutableBytes;
            size_t frameCount = length / bytesPerSample;

            for (size_t i = 0; i < frameCount; i++) {
                // Interleaved frames: L R L R ... Files with more than two
                // channels are drawn from their first channel only.
                SInt16 *frame = frames + i * channelCount;

                // Convert each sample to dB and clamp it to [noiseFloor, 0].
                Float32 left = (Float32)frame[0];
                left = decibel(left);
                left = minMaxX(left, noiseFloor, 0);
                totalLeft += left;

                Float32 right = noiseFloor;
                if (channelCount == 2) {
                    right = (Float32)frame[1];
                    right = decibel(right);
                    right = minMaxX(right, noiseFloor, 0);
                    totalRight += right;
                }

                sampleTally++;
                if (sampleTally > samplesPerPixel) {
                    // Store the column averages and remember the loudest one.
                    left = totalLeft / sampleTally;
                    normalizeMax = MAX(normalizeMax, left);
                    [fullSongData appendBytes:&left length:sizeof(left)];

                    if (channelCount == 2) {
                        right = totalRight / sampleTally;
                        normalizeMax = MAX(normalizeMax, right);
                        [fullSongData appendBytes:&right length:sizeof(right)];
                    }
                    totalLeft = 0;
                    totalRight = 0;
                    sampleTally = 0;
                }
            }
        }
        CMSampleBufferInvalidate(sampleBufferRef);
        CFRelease(sampleBufferRef);
    }

    NSData *finalData = nil;
    if (reader.status == AVAssetReaderStatusFailed || reader.status == AVAssetReaderStatusUnknown) {
        // Something went wrong. Handle it.
        NSLog(@"Reading failed: %@", reader.error);
    }
    if (reader.status == AVAssetReaderStatusCompleted) {
        // Done: fullSongData holds one Float32 per drawn channel per column.
        NSLog(@"rendering output graphics using normalizeMax %f", normalizeMax);
        NSInteger channels = (channelCount == 2) ? 2 : 1;
        UIImage *test = [self audioImageLogGraph:(Float32 *)fullSongData.bytes
                                    normalizeMax:normalizeMax
                                     sampleCount:fullSongData.length / (sizeof(Float32) * channels)
                                    channelCount:channels
                                     imageHeight:100];
        if (test) {
            finalData = imageToData(test);
        }
    }
    return finalData;
}

@end
```


2017-06-27 09:54:48

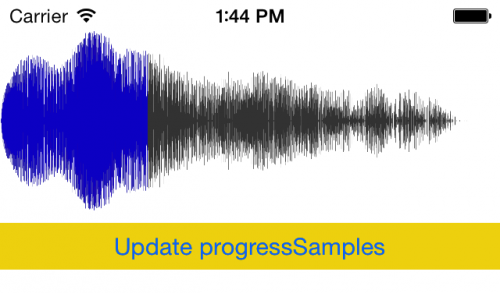

Przeczytałem twoje pytanie i stworzyłem do tego kontrolę. Wygląda tak:

Code here:

https://github.com/fulldecent/FDWaveformView

Discussion here:

https://www.cocoacontrols.com/controls/fdwaveformview

Update 2015-01-29: this project is going strong and ships consistent releases. Thanks for all the exposure!


2015-01-29 16:23:22

I can point you to the one I implemented in my app. It was an Apple sample: aurioTouch, which analyzes sound in three different ways. Apple still doesn't provide a way to analyze audio waveforms directly, so this sample also uses the microphone to analyze the sound.

You can also look at another Apple sample that uses this kind of amplitude: SpeakHere.


2011-11-28 16:30:36

This is the code I used to convert my audio data (an audio file) into a floating-point representation stored in an array.

```objc
// At the top of the .m file:
#import <AudioToolbox/AudioToolbox.h>

// Inside your @implementation:
- (void)PrintFloatDataFromAudioFile {
    NSString *name = @"Filename"; // YOUR FILE NAME
    NSURL *inputFileURL = [[NSBundle mainBundle] URLForResource:name withExtension:@"m4a"]; // SPECIFY YOUR FILE FORMAT
    if (!inputFileURL) {
        return;
    }

    ExtAudioFileRef fileRef = NULL;
    if (ExtAudioFileOpenURL((__bridge CFURLRef)inputFileURL, &fileRef) != noErr) {
        return;
    }

    // Client format: what ExtAudioFileRead hands back (mono, packed 32-bit float).
    AudioStreamBasicDescription audioFormat = {0};
    audioFormat.mSampleRate = 44100; // GIVE YOUR SAMPLING RATE
    audioFormat.mFormatID = kAudioFormatLinearPCM;
    audioFormat.mFormatFlags = kLinearPCMFormatFlagIsFloat | kLinearPCMFormatFlagIsPacked;
    audioFormat.mBitsPerChannel = sizeof(Float32) * 8;
    audioFormat.mChannelsPerFrame = 1; // Mono
    audioFormat.mBytesPerFrame = audioFormat.mChannelsPerFrame * sizeof(Float32); // == sizeof(Float32)
    audioFormat.mFramesPerPacket = 1;
    audioFormat.mBytesPerPacket = audioFormat.mFramesPerPacket * audioFormat.mBytesPerFrame; // == sizeof(Float32)

    // Apply the client format; ExtAudioFile decodes and converts to it on every read.
    ExtAudioFileSetProperty(fileRef, kExtAudioFileProperty_ClientDataFormat,
                            sizeof(AudioStreamBasicDescription), &audioFormat);

    const UInt32 numSamples = 1024; // How many frames to read at a time
    UInt32 outputBufferSize = numSamples * audioFormat.mBytesPerPacket; // 1024 * 4 bytes
    Float32 *outputBuffer = (Float32 *)malloc(outputBufferSize);

    AudioBufferList convertedData;
    convertedData.mNumberBuffers = 1; // one buffer for mono data
    convertedData.mBuffers[0].mNumberChannels = audioFormat.mChannelsPerFrame;
    convertedData.mBuffers[0].mData = outputBuffer;

    // SPECIFY YOUR DATA LIMIT (mine was 882000). Keep it on the heap:
    // 882000 doubles (about 7 MB) would overflow the thread's stack.
    const size_t capacity = 882000;
    double *floatDataArray = (double *)malloc(capacity * sizeof(double));
    size_t j = 0;

    while (j < capacity) {
        UInt32 frameCount = numSamples;
        convertedData.mBuffers[0].mDataByteSize = outputBufferSize; // reset: each read overwrites it
        if (ExtAudioFileRead(fileRef, &frameCount, &convertedData) != noErr || frameCount == 0) {
            break; // error or end of file
        }
        Float32 *samplesAsCArray = (Float32 *)convertedData.mBuffers[0].mData;
        // Only the first frameCount values are valid (the last read is usually short).
        for (UInt32 i = 0; i < frameCount && j < capacity; i++) {
            floatDataArray[j] = (double)samplesAsCArray[i];
            printf("\n%f", floatDataArray[j]); // sample value, in the range -1 to +1
            j++;
        }
    }

    // Use the first j values of floatDataArray here, before freeing it.
    free(floatDataArray);
    free(outputBuffer);
    ExtAudioFileDispose(fileRef);
}
```
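The answer stops once the samples are in `floatDataArray`. To draw them, one common approach (not part of this answer) is to reduce the array to one peak value per horizontal pixel and draw a vertical line of that height around the midline. A minimal sketch in plain C, assuming mono samples in the range -1 to +1; `peak_envelope` is a hypothetical helper name.

```c
#include <math.h>
#include <stddef.h>

/* Reduces `count` mono samples (range -1 ... +1) to `width` peak values:
   out[x] is the largest |sample| in the x-th slice of the input. Slice
   boundaries come from integer division, so every sample lands in exactly
   one slice; a slice with no samples gets a peak of 0. */
void peak_envelope(const double *samples, size_t count, double *out, size_t width) {
    for (size_t x = 0; x < width; x++) {
        size_t begin = x * count / width;
        size_t end = (x + 1) * count / width;
        double peak = 0.0;
        for (size_t i = begin; i < end; i++) {
            double a = fabs(samples[i]);
            if (a > peak) peak = a;
        }
        out[x] = peak;
    }
}
```

Each peak `p` can then be drawn as a line from `midY - p * halfHeight` to `midY + p * halfHeight`. Taking the peak rather than the average keeps short transients visible when many samples share one pixel.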


2014-01-15 11:02:59

This is my answer. Thanks, all you geeks!

Objective-C

Here is the code:

```objc
// Assumes a property such as: @property (nonatomic, strong) NSArray *drawData;
// notify.object is expected to be an NSArray of two NSData objects holding doubles.
- (void)listenerData:(NSNotification *)notify {
    NSArray *data = notify.object;
    // UIKit must be updated on the main thread; the notification may arrive elsewhere.
    dispatch_async(dispatch_get_main_queue(), ^{
        self.drawData = data;
        [self setNeedsDisplay];
    });
}

- (void)drawRect:(CGRect)rect {
    NSArray *data = self.drawData;
    if (data.count < 2) {
        return;
    }
    NSData *tdata = [data objectAtIndex:0];
    const double *srcdata = (const double *)tdata.bytes;
    NSUInteger datacount = tdata.length / sizeof(double);

    tdata = [data objectAtIndex:1];
    const double *coveddata = (const double *)tdata.bytes;
    // Never read past the end of the shorter array.
    datacount = MIN(datacount, tdata.length / sizeof(double));
    if (datacount < 2) {
        return; // the x step below divides by (datacount - 1)
    }

    CGContextRef context = UIGraphicsGetCurrentContext();
    CGRect bounds = self.bounds;
    CGContextClearRect(context, bounds);
    CGFloat midpos = bounds.size.height / 2;
    CGFloat step = bounds.size.width / (datacount - 1);

    // First array: red shape, positive values plotted above the midline.
    const double scale = 1.0 / 100;
    CGContextBeginPath(context);
    CGContextMoveToPoint(context, 0, midpos);
    CGFloat xpos = 0;
    for (NSUInteger i = 0; i < datacount; i++) {
        CGContextAddLineToPoint(context, xpos, midpos - srcdata[i] * scale);
        xpos += step;
    }
    CGContextAddLineToPoint(context, bounds.size.width, midpos);
    CGContextClosePath(context);
    CGContextSetRGBFillColor(context, 1.0, 0.0, 0.0, 1.0);
    CGContextFillPath(context);

    // Second array: magenta shape, positive values plotted below the midline.
    const double scale2 = 1.0 / 100;
    CGContextBeginPath(context);
    CGContextMoveToPoint(context, 0, midpos);
    xpos = 0;
    for (NSUInteger i = 0; i < datacount; i++) {
        CGContextAddLineToPoint(context, xpos, midpos + coveddata[i] * scale2);
        xpos += step;
    }
    CGContextAddLineToPoint(context, bounds.size.width, midpos);
    CGContextClosePath(context);
    CGContextSetRGBFillColor(context, 1.0, 0.0, 1.0, 1.0);
    CGContextFillPath(context);
}
```
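The `drawRect:` above fills each data array as a closed shape: it starts at the midline on the left edge, visits one point per value spaced `width / (datacount - 1)` apart, at `midpos - value * scale` for the first array and `midpos + value * scale` for the second (UIKit's y axis points down), then returns to the midline at the right edge. A minimal plain-C sketch of just that vertex computation, so the geometry can be checked without UIKit; `point` and `waveform_polygon` are hypothetical names, and the sketch computes x as `i * step` instead of accumulating `xpos += step`, which yields the same points without accumulated rounding error.

```c
#include <stddef.h>

typedef struct { double x, y; } point;

/* Fills `out` (room for count + 2 points) with the vertices of the closed
   shape drawRect: fills for one data array. direction = -1 reproduces the
   first array (midpos - value * scale), +1 the second (midpos + value * scale).
   Returns the number of vertices, or 0 when count < 2. */
size_t waveform_polygon(const double *values, size_t count, double width, double height,
                        double scale, int direction, point *out) {
    if (count < 2) return 0;
    double mid = height / 2.0;
    double step = width / (double)(count - 1);
    size_t n = 0;
    out[n++] = (point){ 0.0, mid };                          /* MoveToPoint(0, midpos) */
    for (size_t i = 0; i < count; i++)
        out[n++] = (point){ (double)i * step, mid + direction * values[i] * scale };
    out[n++] = (point){ width, mid };                        /* back to the midline */
    return n;
}
```

Closing the path from the last vertex back to `(0, mid)` runs along the midline, so the filled area is exactly the region between the curve and the midline.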


2013-07-25 08:07:26